A method for validating strategy, developed by Spark No. 9.

Most companies are good at building products. The harder problem is knowing whether a product will land before you launch it. To reduce that uncertainty, companies run surveys, commission focus groups, and increasingly turn to AI-generated personas to get some signal before they go to market. But all of those methods rest on people's opinions and predictions of future behavior, not on behavior itself.

This is the Say Vs. Do Gap: the distance between what consumers say they'll do in research settings and what they actually do when it's time to make a real decision in real life. ‘Say’ data has never been a reliable predictor of ‘Do’ data. Spark No. 9 developed heat-testing, research grounded in observed behavior rather than stated preferences, to bridge that gap and produce decision-grade evidence.

Most market research methods share a fundamental flaw: they ask people to predict their own behavior. Surveys, focus groups, and AI-generated personas all generate ‘Say’ data — responses shaped by social pressure, faulty self-knowledge, and the artificial context of being asked.

The Say Vs. Do Gap is the systematic difference between what people say they'll do and what they actually do when they encounter a product in the real world, with no moderator, no prompt, and real stakes. It is why promising survey results so often fail to translate into sales, and why products fail at launch.

Heat-testing is designed to bridge this gap by generating ‘Do’ data instead.

Heat-testing is a market research and product validation methodology developed by Spark No. 9 and published in Harvard Business Review. It generates behavioral data by putting real strategies in front of real audiences in the real world and measuring what they actually do. The result is a data-driven picture of where your market opportunity actually is — which is often a different place than where you assumed it would be.

For a new launch, that means knowing which audience and ad combination gives you the strongest foundation before you commit. For repositioning, it means finding new demand without risking the customer base you've already built.

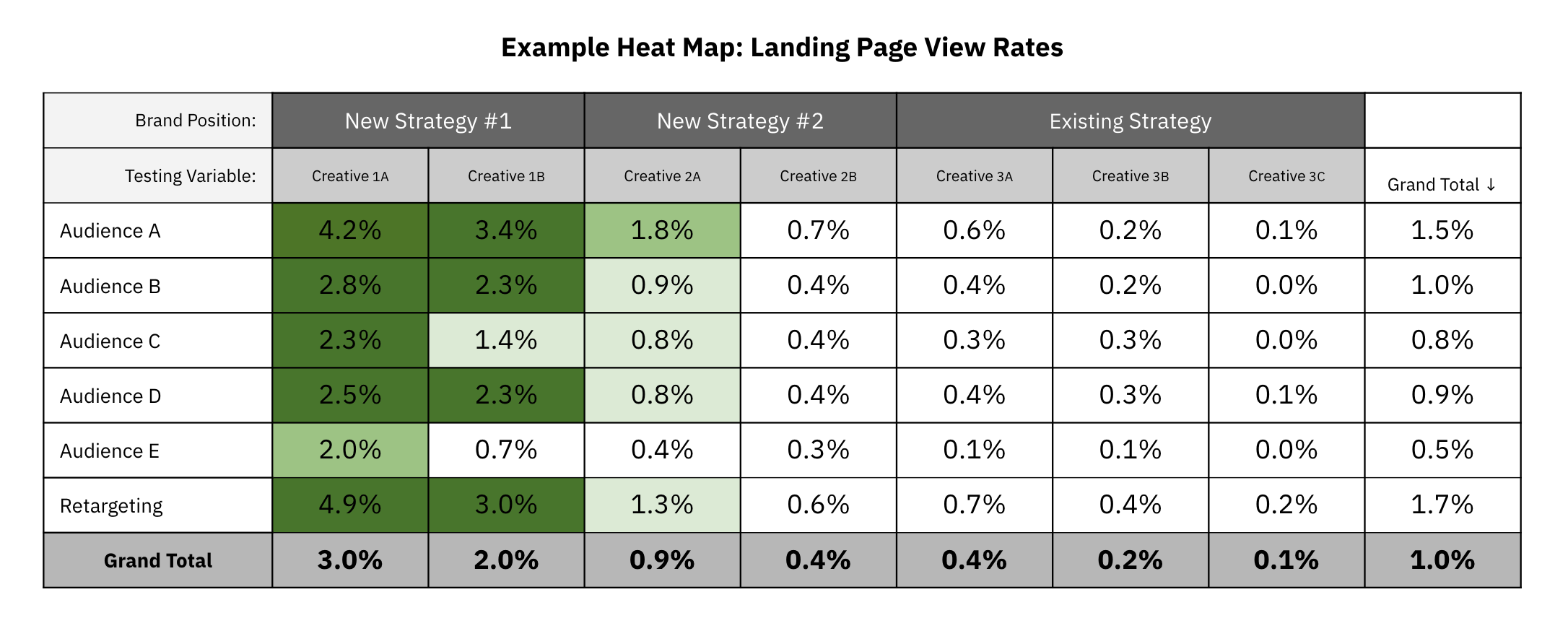

Distinct strategies are developed and tested in the real world with live ads targeting multiple audiences. A sample of every audience sees every ad, with an equal budget allocated to each, creating a true apples-to-apples comparison. The test doesn't require a large spend, only enough to reach statistical significance. What comes back is decision-grade evidence: real audiences making real choices.
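To make the test design concrete, here is a minimal sketch in Python of the grid described above. It is an illustration, not Spark No. 9's tooling: the audience names, ad names, budget figure, and the `build_test_grid` helper are all hypothetical, and it assumes that "equal budget" means an even split across every audience and ad pairing.

```python
from itertools import product


def build_test_grid(audiences, ads, total_budget):
    """Pair every audience with every ad and split the budget evenly.

    Assumption: the budget is divided equally across all pairings,
    so each cell of the grid gets the same spend.
    """
    cells = list(product(audiences, ads))
    per_cell = total_budget / len(cells)
    return [
        {"audience": audience, "ad": ad, "budget": per_cell}
        for audience, ad in cells
    ]


# Hypothetical inputs, for illustration only.
grid = build_test_grid(
    audiences=["new parents", "college students", "retirees"],
    ads=["saves time", "saves money"],
    total_budget=3000.0,
)

for cell in grid:
    print(cell)
```

Because every audience is paired with every ad at the same spend, differences in response can be attributed to the pairing itself and not to uneven exposure.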

A heat test is designed to answer the questions that opinion-based research tends to get wrong. Which audience actually responds in the real world, with statistical validity? What specific strategy or framing drives real behavior? Across all the audience and strategy combinations you test, where is demand strongest? Surveys, consumer panels, focus groups, and other conventional research cannot predict real-world behavior with statistical confidence; instead, they rely on interpretation, which limits their usefulness for reducing risk around major decisions such as product launches and rebranding.

The heat map generated by heat-testing makes patterns visible quickly. Certain combinations of audience and ad generate strong response, while others generate almost none. The combinations with the highest activity tell you not just that demand exists, but with whom it exists and the strategy that unlocked it. This specificity—and the fact that experiments take place via live ad campaigns—makes heat-testing instantly actionable in a way that traditional validation methods are not.
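As a rough illustration of how such a heat map could be read, the Python sketch below turns raw counts into a response rate per audience and ad pairing, prints the grid, and compares the strongest cell with the runner-up. Everything in it is an assumption made for the example: the numbers are invented, clicks stand in for whatever behavior a real test measures, and the two-proportion z-test is a standard textbook check chosen here, not necessarily the statistic Spark No. 9 uses.

```python
import math

# Hypothetical results, for illustration only:
# (audience, ad) -> (impressions, clicks)
results = {
    ("new parents", "saves time"): (5000, 150),
    ("new parents", "saves money"): (5000, 60),
    ("college students", "saves time"): (5000, 40),
    ("college students", "saves money"): (5000, 110),
    ("retirees", "saves time"): (5000, 35),
    ("retirees", "saves money"): (5000, 45),
}


def response_rates(results):
    """Turn raw counts into a response rate per audience/ad cell."""
    return {
        cell: clicks / impressions
        for cell, (impressions, clicks) in results.items()
    }


def two_proportion_z(n1, x1, n2, x2):
    """z statistic for the difference between two rates (pooled standard error)."""
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    return (x1 / n1 - x2 / n2) / se


rates = response_rates(results)
ranked = sorted(rates, key=rates.get, reverse=True)
best, runner_up = ranked[0], ranked[1]

# Print the grid: one row per audience, one column per ad.
audiences = sorted({audience for audience, _ in results})
ads = sorted({ad for _, ad in results})
print(f"{'':18}" + "".join(f"{ad:>14}" for ad in ads))
for audience in audiences:
    print(f"{audience:18}" + "".join(f"{rates[(audience, ad)]:>14.1%}" for ad in ads))

z = two_proportion_z(*results[best], *results[runner_up])
print(f"strongest cell: {best}, z vs. runner-up: {z:.2f}")
```

An absolute z value above 1.96 is the conventional threshold for a two-sided test at the 5% level. With many cells in play, a real analysis would likely also account for multiple comparisons.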

The methodology is not tied to any single stage of the product lifecycle. Companies use it before a launch, after a launch, and anywhere in between.

Spark No. 9 introduced heat-testing 14 years ago. In December 2022, a feature in Harvard Business Review presented the method to a global business audience, where it was recognized as a substantive alternative to the market research approaches most companies default to and widely shared among product and marketing leaders navigating high-stakes launch decisions. The approach has since been applied across consumer, B2B, and enterprise product launches worldwide.

"To test new products, most companies rely on creating minimum viable products and testing customer feedback, or conducting focus groups or marketing surveys. There's another method companies should try: heat-testing."

— Harvard Business Review, December 2022